By cesar-rgon

This advanced script installs the Xfce desktop and a set of programs, chosen according to the user's needs, on top of an Ubuntu Server base system.

Main features

Unattended installation of the Xfce desktop and the applications selected by the user.

Error log of the installation process.

Option to shut down, restart or show the error log at the end of the installation process.

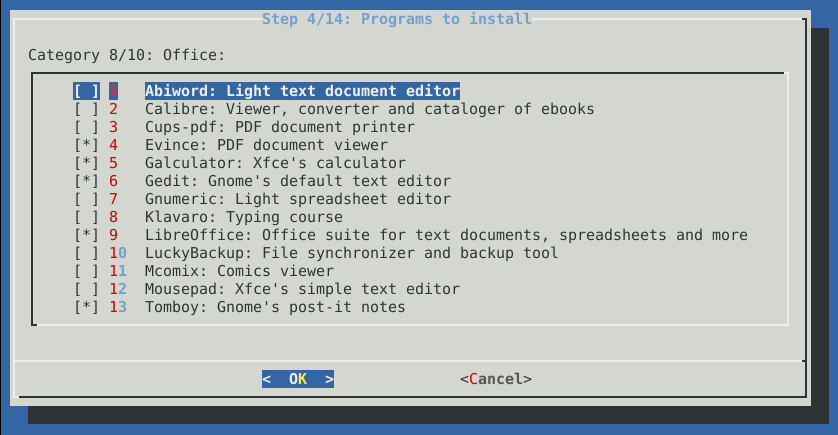

It offers a wide variety of programs of different types.

Automatic configuration of applications so they are ready to use.

Multilingual support: English and Spanish texts are included in the script.

Why use this script over other alternatives?

It's not a distro, it's a script: quick to download and use.

It's suitable for homes, offices and servers.

It can be installed on different Ubuntu versions: 12.04 and 13.04.

Lower use of system hardware resources.

Greater control over which applications to install.

Ubuntu Server offers a longer maintenance period than a conventional Ubuntu desktop release.

Saves configuration time after the installation process.

More flexible: it offers applications from different desktops, not only from Xfce.

Modern desktop themes (Faenza icons and GreyBird theme).

Automatic installation of third-party repositories.

For more information, visit the script's website or Facebook page.

Sorry, I can't post URLs yet: forum rules require at least five posts first.

You can find the GitHub project by searching Google for the keywords "xfce installer"; the project is "cesar-rgon/xfce-installer".

The GitHub project page includes the URL of the website.

The script's website has full information, screenshots, installation steps and Facebook links.

I hope you find this script useful.

Regards.